Continuous online learning

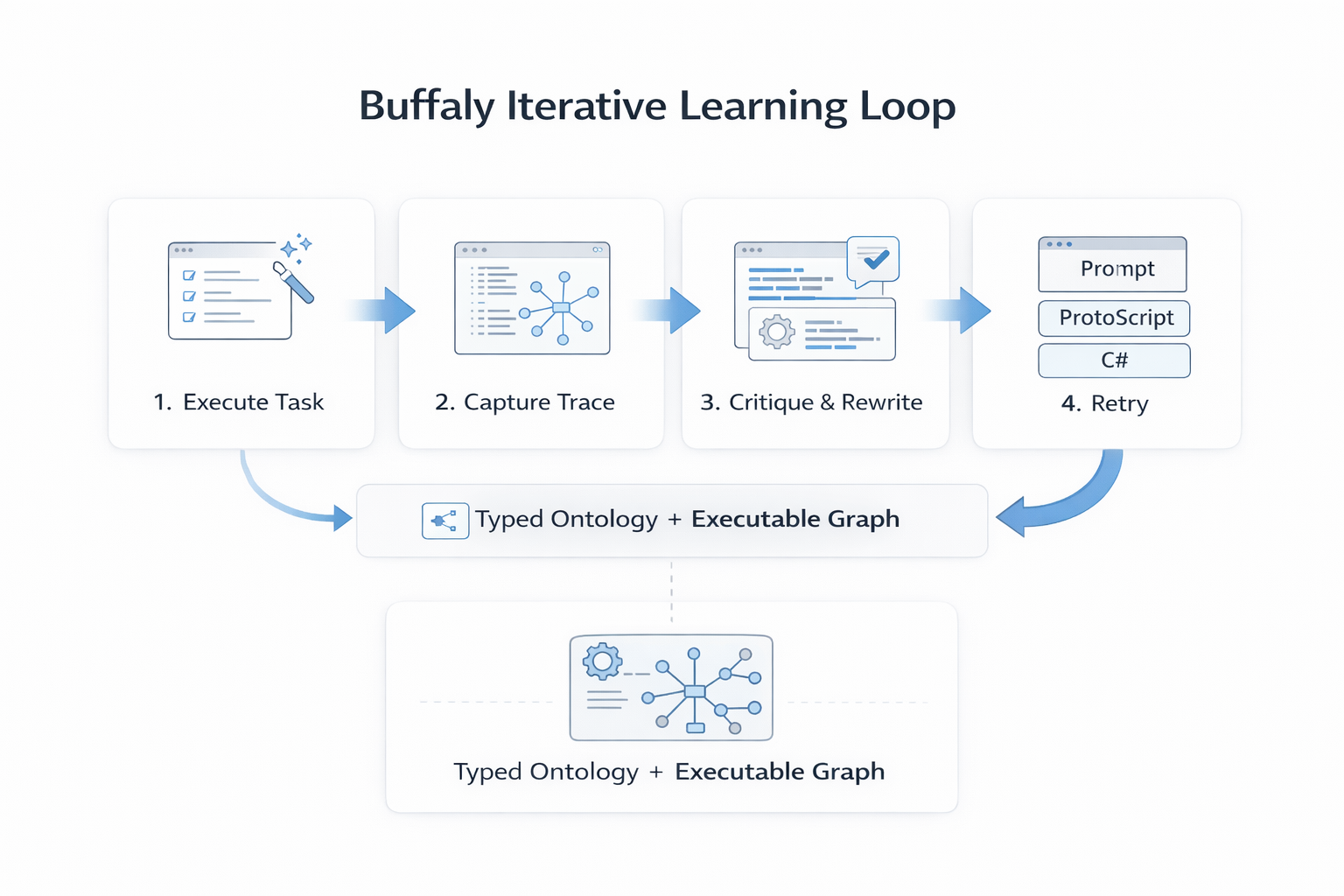

Buffaly learns from live outcomes and updates execution quality over time, rather than relying on static memory or replayed context.

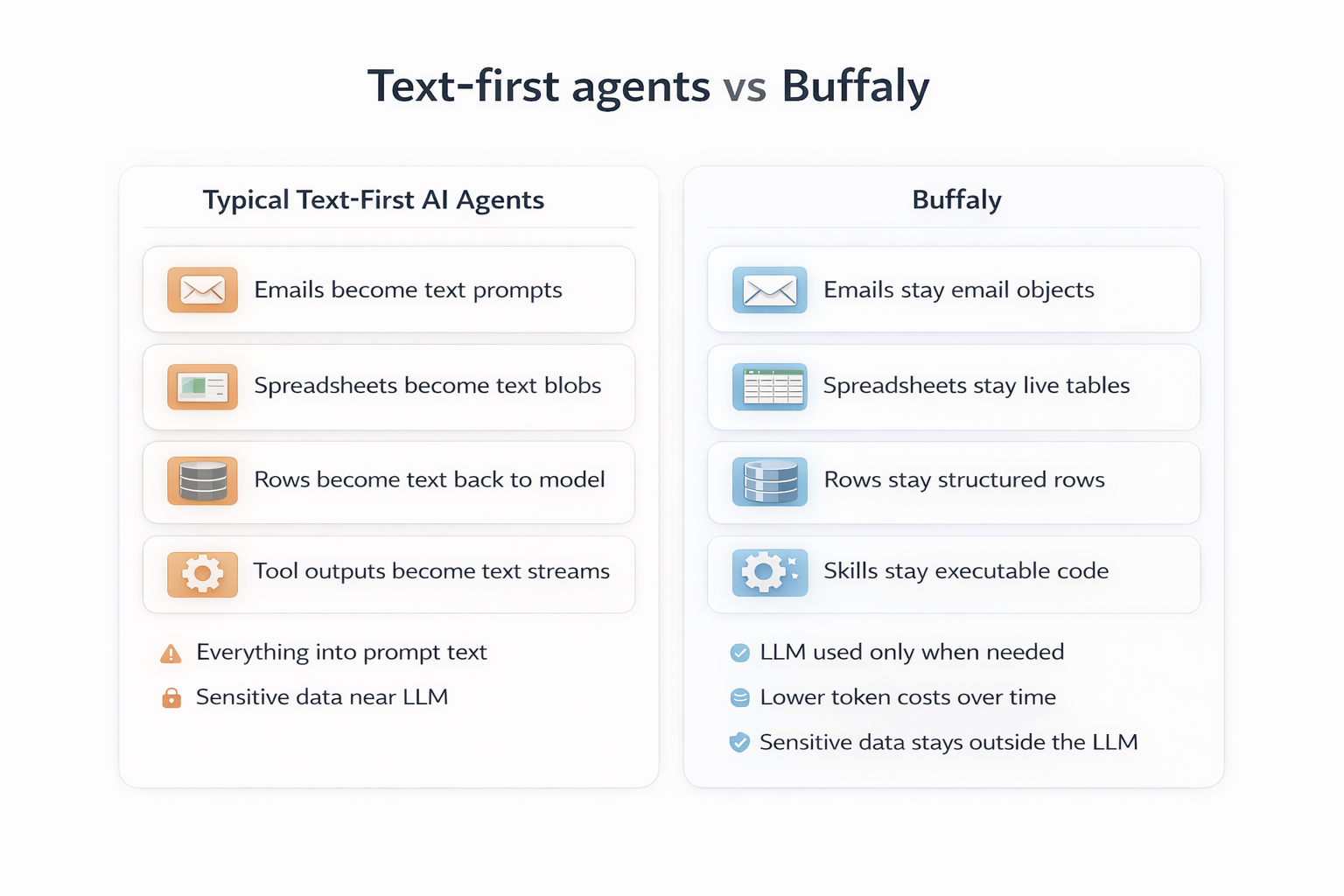

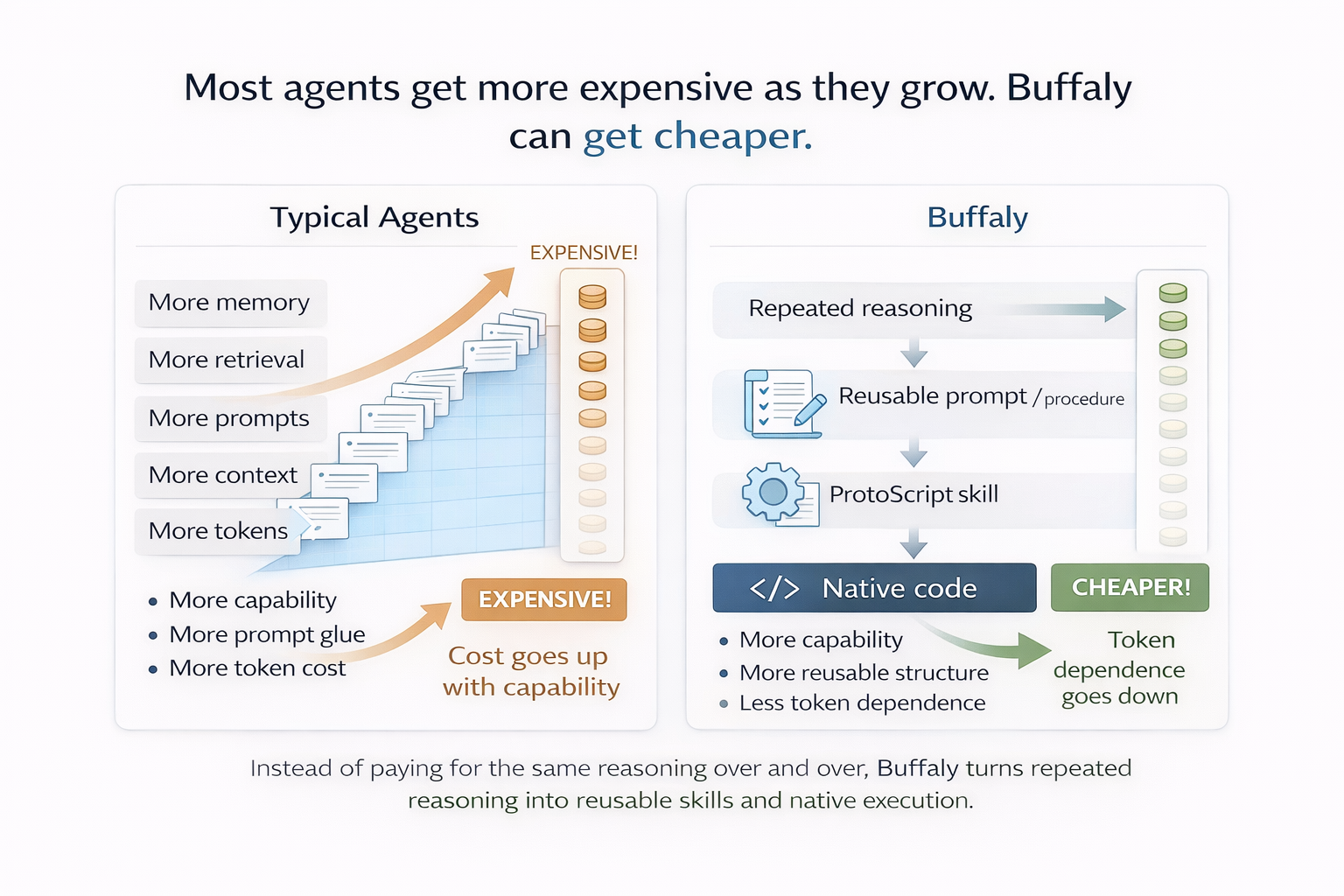

Most agent systems remain trapped in a text-first loop: convert reality into prompts, ask a model, then translate output back into action. Buffaly follows a different path. It learns online, rewrites runtime capability while operating, executes against native objects, and can drive token demand down as capability compounds.

Buffaly learns from live outcomes and updates execution quality over time, rather than relying on static memory or replayed context.

It can create, revise, and promote runtime tools and procedures while the system is actively running.

Critical execution stays typed and grounded in runtime objects instead of being flattened into prompt material.

As reasoning becomes reusable runtime capability, token-heavy orchestration can flatten or decline over time.

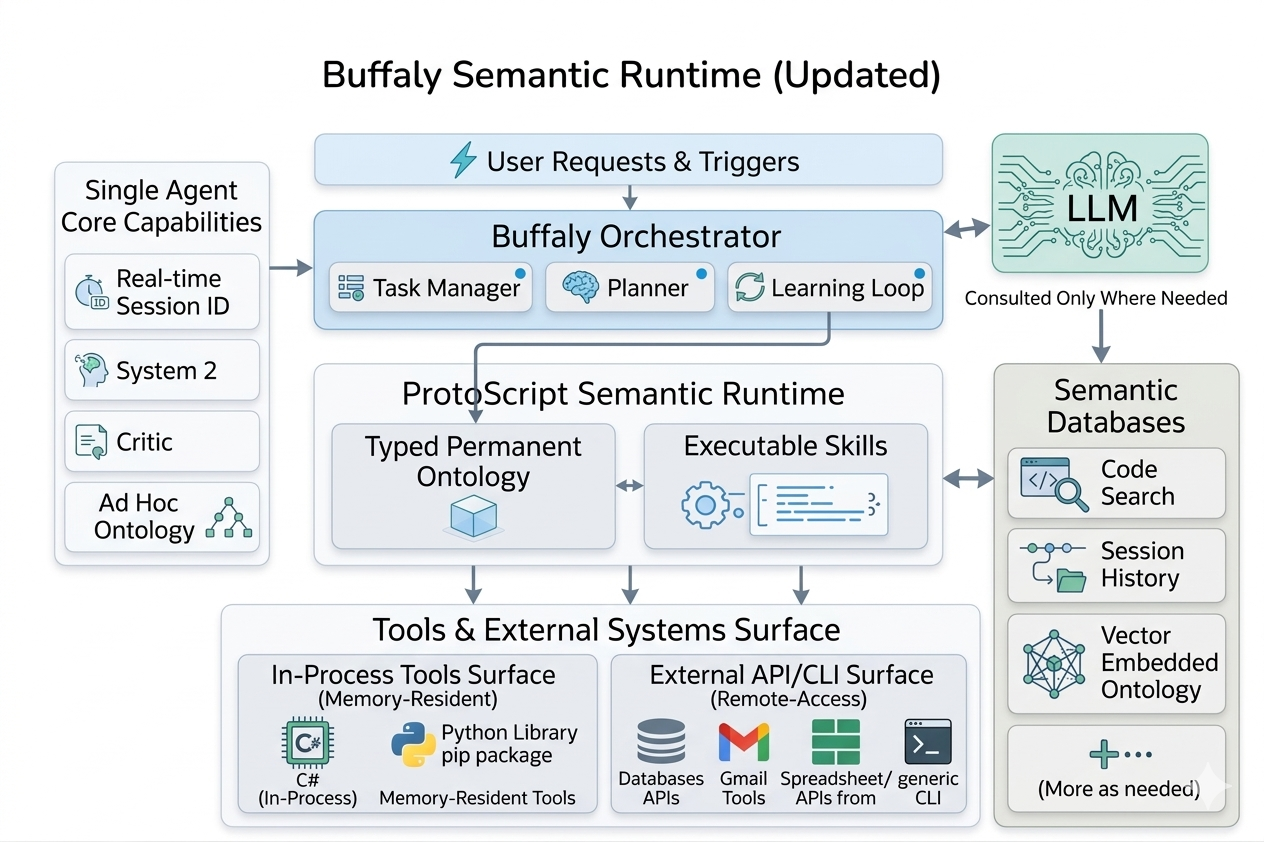

Buffaly keeps language, structure, and execution connected but distinct. The semantic runtime is the control layer: ontology, executable graph logic, and constrained execution surfaces work together so learning can be captured as durable system capability.

Buffaly does more than store history. It can detect what works in production, represent that learning in structure, and repeatedly turn successful patterns into tools and procedures that execute again under control. This creates compounding capability instead of one-off reasoning.

Runtime writing and rewriting is central to this loop. Buffaly can generate and update ProtoScript, revise existing runtime logic, and integrate native C# functionality while live. New understanding does not remain output text; it becomes executable behavior that can be reused and improved.

Over time, each successful execution has a path to become part of future execution quality, turning operations into a continuous learning pipeline.

Most agent stacks flatten files, records, state, and policies into prompt text before action. Buffaly can operate on typed entities, constrained actions, spreadsheets, databases, and private runtime state directly. More of the real system remains outside prompt space, which improves control, reliability, and operational clarity.

In practice, this means Buffaly does not need to serialize every decision boundary into tokens before it can act. It can reason with language where needed, but execute through native runtime structures where precision and safety matter most.

When the world is not universally flattened into text, hostile text has fewer universal paths to influence control flow. Buffaly reduces prompt-injection exposure structurally by keeping secrets, constraints, and executable actions in typed runtime layers outside prompt space.

Buffaly can get smarter and cheaper at the same time. As repeated reasoning is converted into ontology, constrained actions, procedures, ProtoScript, and native execution, less of the same work must be paid for repeatedly in prompt tokens.

This is not a one-time optimization; it is an architectural trajectory where intelligence compounds while prompt dependence can flatten or decline.

Buffaly is designed to become more capable as it runs while moving more execution into structured runtime layers. That combination changes the operating curve: stronger control, compounding intelligence, and better long-run economics from the same system.